Too lazy to sign your PowerShell scripts? Yes, of course signing provides security benefits, but the manual steps are easily forgotten, and re-signing needs to happen after every script change. Because I like CI/CD topics and did not find a solution on the internet, I decided to build one based on Azure capabilities. Furthermore, I wanted a solution that does not require handing out the code signing certificate to each script author, which is useful if you have a bunch of people writing PowerShell scripts.

From a personal perspective, I would also recommend signing scripts you hand over to customers to ensure their integrity: as soon as a signed script is changed, its signature becomes invalid.

You can find more general recommendations about script signing in the PowerShell docs.

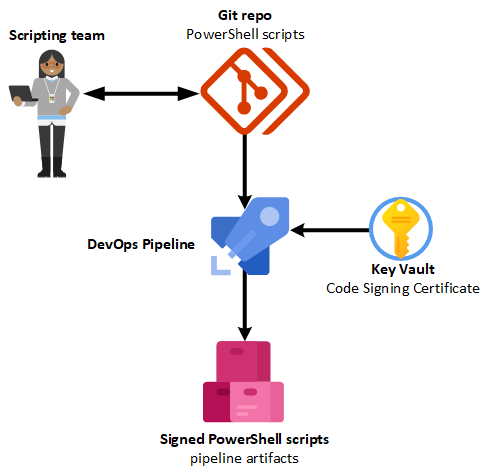

Solution overview

The key vault will store the code signing certificate with an access policy that allows access from the Azure DevOps pipeline.

The pipeline consists of the following steps:

- Import code signing certificate

- The certificate is supplied as secret in a variable group which is linked to the key vault

- Access to the key vault is granted with a service connection (service principal)

- Sign PowerShell scripts which contain a “magic token”

- All

*.ps1within the attached repository will be enumerated - To control which scripts will be signed only those with the “magic token” get processed

- The “magic token” gets removed before signing the script

- All

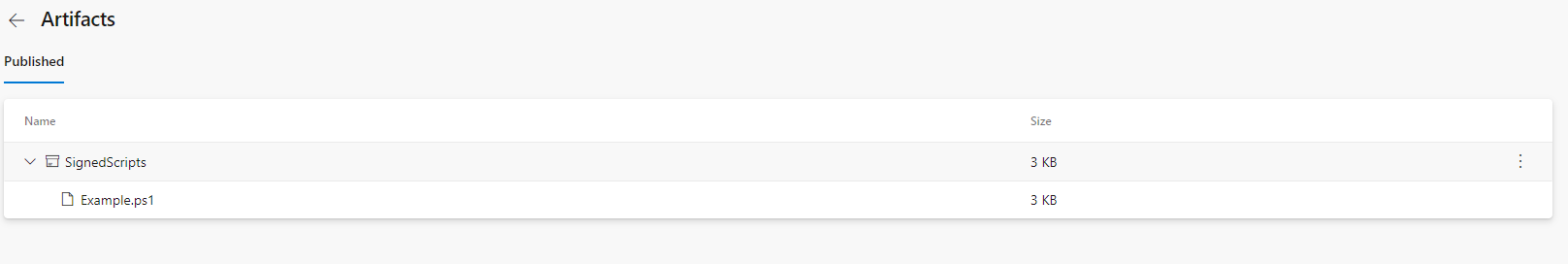

- Publish signed PowerShell scripts as pipeline artifacts

- To make them available for download

This solution has the advantage that you don’t need to hand out your code signing certificate to the team that authors the scripts. You also get an automated and stable way to sign your scripts, including a clear history of which scripts were signed.

If you want to have a look at the pipeline configuration, you can find it on my GitHub account. I added all the PowerShell code as inline scripts, but you could also move it into external script files if you want to run additional pipeline steps and keep the YAML cleaner.

To store a certificate from a key vault directly in a certificate store without creating any files, I found the following overload of the X509Certificate2.Import() method, which accepts the certificate as a Byte[] array together with X509KeyStorageFlags to ensure that the private key gets imported as well:

Import(Byte[], SecureString, X509KeyStorageFlags) (see the .NET API docs)


In the pipeline, we won’t use the Get-AzKeyVaultSecret cmdlet, because we access the key vault through a variable group that references it.
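The import step can be sketched as follows. This is a minimal sketch, not the code from my pipeline: the function name Import-CodeSigningCertificate is hypothetical, and it assumes that the secret Key Vault keeps for the certificate holds the PFX as a base64 string without a password. It uses the X509Certificate2 constructor with the same Byte[], password and X509KeyStorageFlags arguments as the Import() overload, because Import() is not supported on .NET Core, which PowerShell 7 runs on.

```powershell
# Hypothetical helper: turn the base64-encoded PFX from the Key Vault secret into an
# X509Certificate2 and add it to Cert:\CurrentUser\My without writing a file.
function Import-CodeSigningCertificate {
    param(
        [Parameter(Mandatory)] [string] $Base64Pfx,
        # Assumption: the PFX stored by Key Vault has no password
        [string] $Password = ''
    )

    $bytes = [Convert]::FromBase64String($Base64Pfx)

    # PersistKeySet keeps the private key available to the certificate in the store
    $flags = [System.Security.Cryptography.X509Certificates.X509KeyStorageFlags]::PersistKeySet

    # Same arguments as the Import(Byte[], ..., X509KeyStorageFlags) overload,
    # but via the constructor so it also works in PowerShell 7
    $cert = [System.Security.Cryptography.X509Certificates.X509Certificate2]::new($bytes, $Password, $flags)

    $storeName = [System.Security.Cryptography.X509Certificates.StoreName]::My
    $storeLocation = [System.Security.Cryptography.X509Certificates.StoreLocation]::CurrentUser
    $store = [System.Security.Cryptography.X509Certificates.X509Store]::new($storeName, $storeLocation)
    $store.Open([System.Security.Cryptography.X509Certificates.OpenFlags]::ReadWrite)
    try {
        $store.Add($cert)
    }
    finally {
        $store.Close()
    }

    return $cert
}
```

In the pipeline, the base64 value would come from the Key Vault-linked variable group. Secret variables are not exposed to scripts as environment variables automatically, so the PowerShell task needs an env mapping such as CODE_SIGNING_CERT: $(CodeSigningCert), where CodeSigningCert stands for your (hypothetical) certificate name; the script can then call Import-CodeSigningCertificate -Base64Pfx $env:CODE_SIGNING_CERT. For a local test, Get-AzKeyVaultSecret with -AsPlainText returns the same base64 value.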


To process all PowerShell scripts in the attached repository, I wrote a short script that uses #PerformScriptSigning as the “magic token” to identify the scripts I want to sign.

The signed scripts are copied to $env:Build_ArtifactStagingDirectory, which is available within the pipeline to store the artifacts we want to publish.
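Selecting and preparing the scripts could look like the sketch below. Copy-ScriptToSign is a hypothetical helper, and it assumes the token sits on its own line. Instead of removing the token in place, it writes token-free copies into the staging directory, so the signing step can run on those copies; in the pipeline it would be called with $env:Build_SourcesDirectory and $env:Build_ArtifactStagingDirectory.

```powershell
# Hypothetical helper: copy every *.ps1 that contains the magic token on its own line
# into the staging directory, with the token line removed, ready for signing.
function Copy-ScriptToSign {
    param(
        [Parameter(Mandatory)] [string] $SourcePath,
        [Parameter(Mandatory)] [string] $DestinationPath,
        [string] $Token = '#PerformScriptSigning'
    )

    $sourceRoot = (Resolve-Path -LiteralPath $SourcePath).ProviderPath
    $copied = @()

    foreach ($file in Get-ChildItem -LiteralPath $sourceRoot -Filter '*.ps1' -Recurse -File) {
        $lines = @(Get-Content -LiteralPath $file.FullName)

        # Only scripts that opt in via the magic token are processed
        $hasToken = @($lines | Where-Object { $_.Trim() -eq $Token }).Count -gt 0
        if (-not $hasToken) { continue }

        # Keep the folder structure relative to the repository root
        $relative = $file.FullName.Substring($sourceRoot.Length).TrimStart('\', '/')
        $target = Join-Path $DestinationPath $relative
        New-Item -ItemType Directory -Path (Split-Path $target -Parent) -Force | Out-Null

        # Write the copy without the token line, so the token is not part of the signed content
        $lines | Where-Object { $_.Trim() -ne $Token } | Set-Content -LiteralPath $target -Encoding utf8
        $copied += $target
    }

    return $copied
}
```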


One small but important detail is to use the TimestampServer parameter of the Set-AuthenticodeSignature cmdlet: with a timestamp, the signature remains valid even after your code signing certificate has expired.
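A signing step with a timestamp could look like this minimal sketch. The function name Invoke-ScriptSigning is hypothetical, and http://timestamp.digicert.com is only an example timestamp server; use the one your certificate authority recommends. Set-AuthenticodeSignature only runs on Windows, which matches a Windows build agent.

```powershell
# Hypothetical helper: sign each script with the imported code signing
# certificate and a timestamp, and fail the step if signing does not succeed.
function Invoke-ScriptSigning {
    param(
        [Parameter(Mandatory)] [string[]] $Path,
        [Parameter(Mandatory)]
        [System.Security.Cryptography.X509Certificates.X509Certificate2] $Certificate,
        # Example timestamp server only (assumption); use the one your CA recommends
        [string] $TimestampServer = 'http://timestamp.digicert.com'
    )

    foreach ($file in $Path) {
        $signature = Set-AuthenticodeSignature -FilePath $file -Certificate $Certificate `
            -TimestampServer $TimestampServer -HashAlgorithm SHA256

        # Anything other than Valid (e.g. an incomplete certificate chain) fails the step
        if ($signature.Status -ne 'Valid') {
            throw "Signing '$file' failed: $($signature.StatusMessage)"
        }
    }
}
```

The certificate can be taken from the store, for example with Get-ChildItem Cert:\CurrentUser\My -CodeSigningCert | Select-Object -First 1, and the paths from the *.ps1 files in $env:Build_ArtifactStagingDirectory.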


The full pipeline configuration on my GitHub account includes the PowerShell snippets mentioned above, optimized for the pipeline.

Prerequisites

- Code signing certificate
- Azure Key Vault
- Git repo with your PowerShell scripts
  - You can also host your repo directly on Azure DevOps
- Azure DevOps for the pipeline
  - A free tier for up to 5 users is available

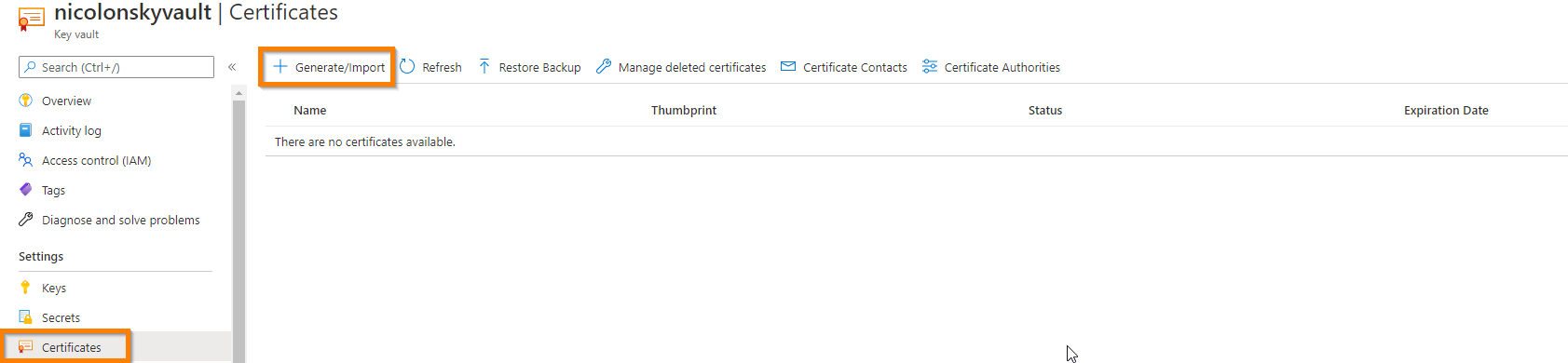

Key vault

Upload your PFX code signing certificate to the key vault under the Certificates tab and choose a descriptive name:

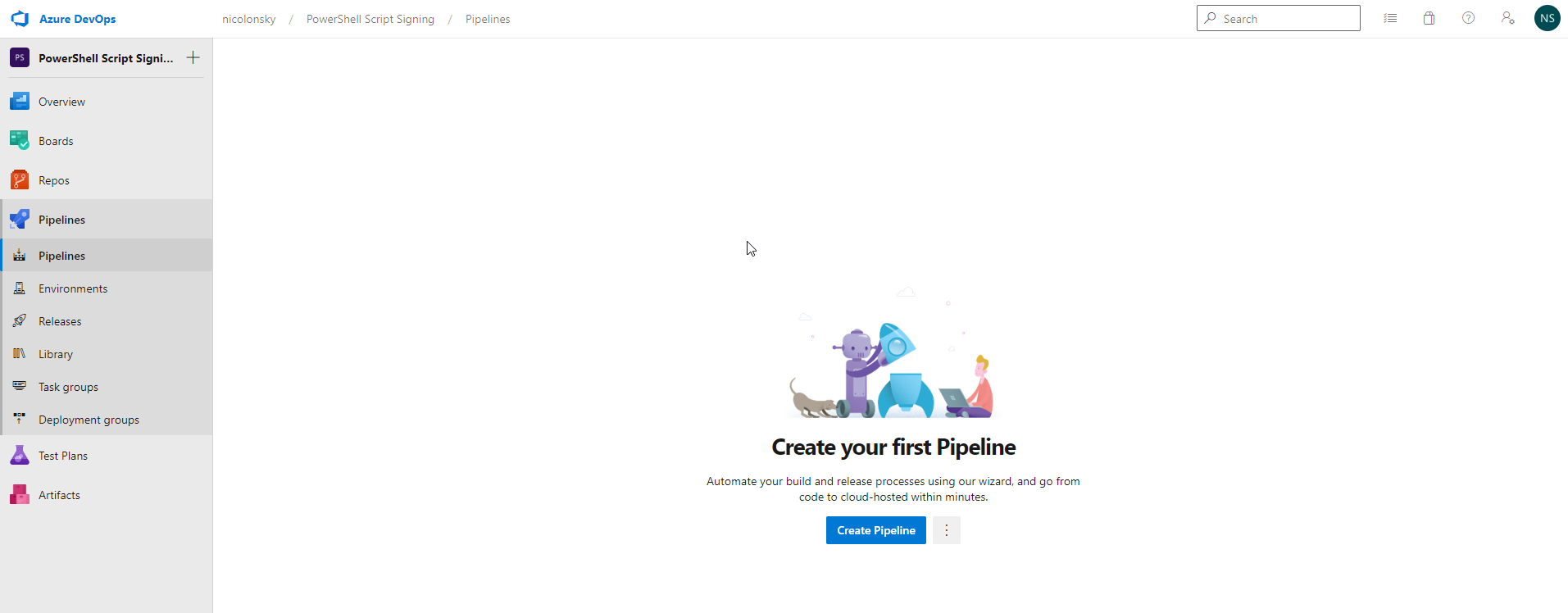

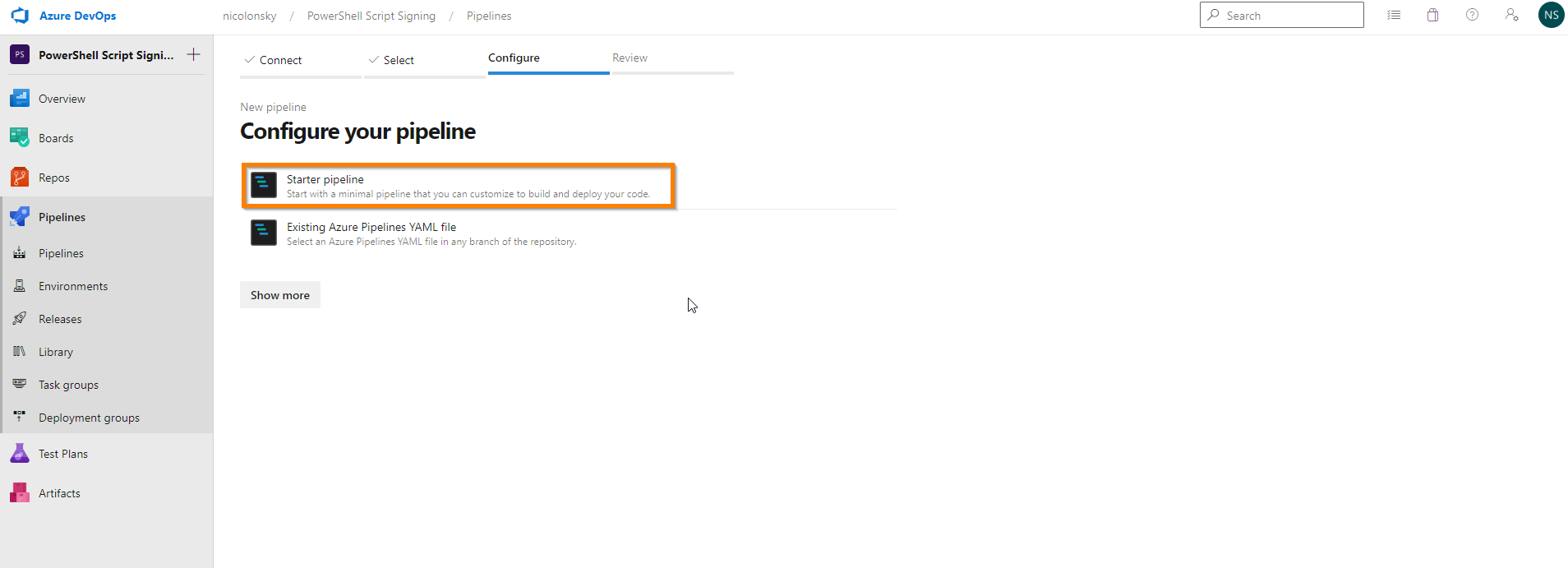
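If you prefer scripting over the portal, the Import-AzKeyVaultCertificate cmdlet from the Az.KeyVault module uploads a PFX as well. The wrapper function and its parameter names below are hypothetical; it assumes you are already signed in with Connect-AzAccount and are allowed to import certificates into the vault.

```powershell
# Hypothetical wrapper around Import-AzKeyVaultCertificate (Az.KeyVault module).
# Assumes Connect-AzAccount has already been run.
function Publish-CodeSigningCertificate {
    param(
        [Parameter(Mandatory)] [string] $VaultName,
        [Parameter(Mandatory)] [string] $CertificateName,
        [Parameter(Mandatory)] [string] $PfxPath,
        # A mandatory SecureString parameter is prompted for without echoing the input
        [Parameter(Mandatory)] [securestring] $PfxPassword
    )

    Import-AzKeyVaultCertificate -VaultName $VaultName -Name $CertificateName `
        -FilePath $PfxPath -Password $PfxPassword
}
```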

Azure DevOps Pipeline

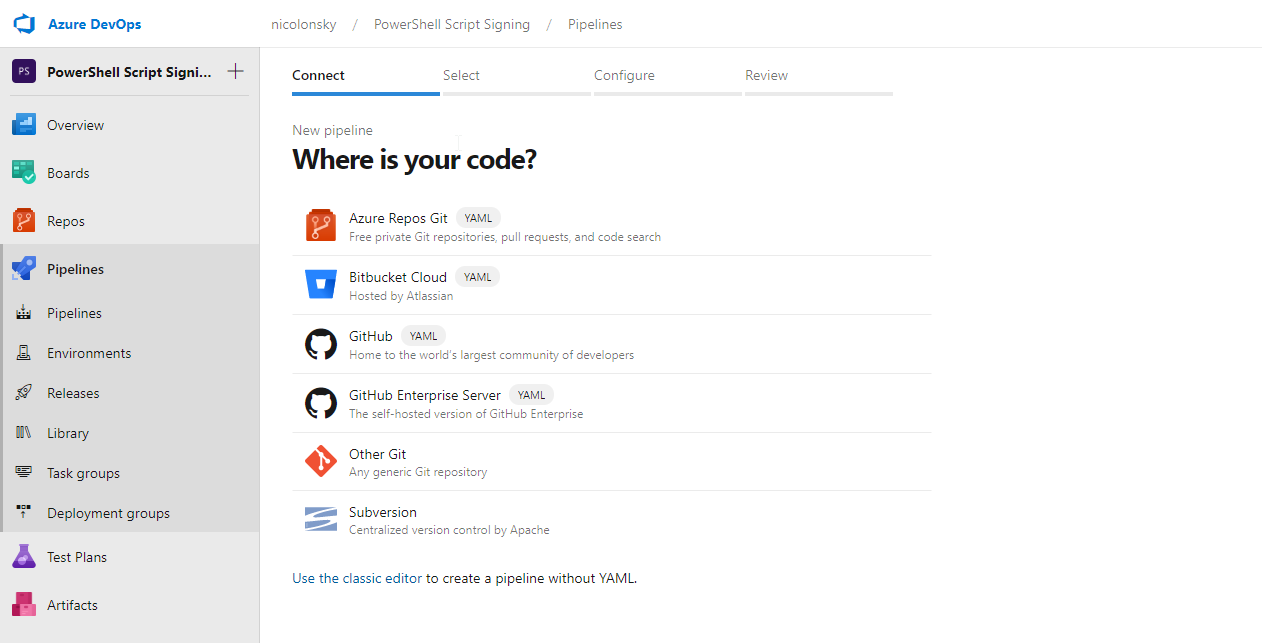

Optionally, create a new Azure DevOps project, or use an existing one.

Select the repo that contains your PowerShell scripts (if you want to use the default Azure DevOps repo, make sure to initialize it first).

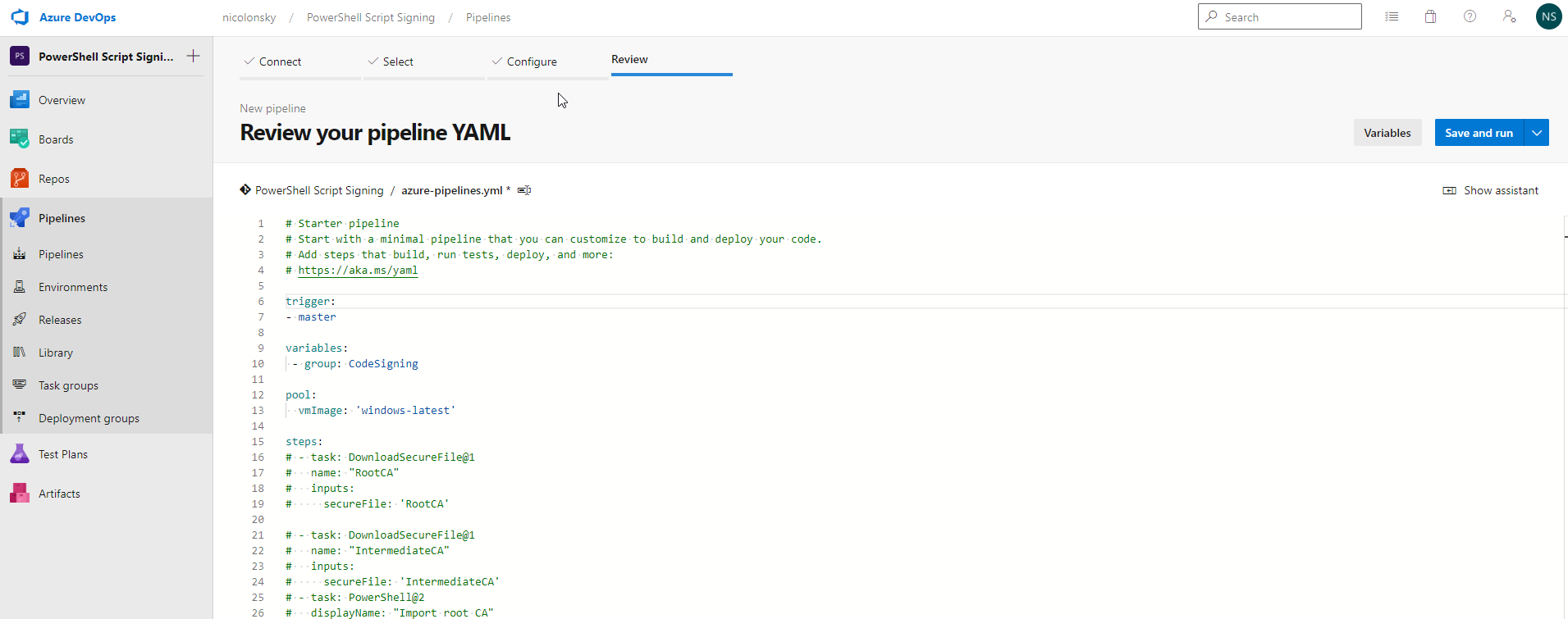

Replace the default YAML content with the pipeline configuration and save the pipeline:

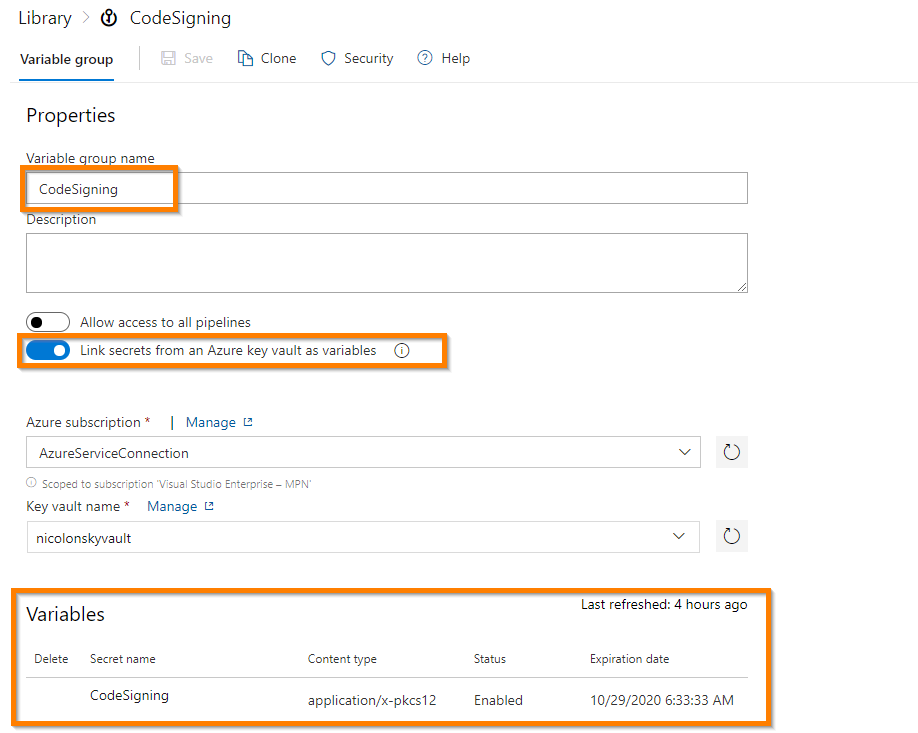

Variable Group

We now connect our variable group (found under the Library submenu) to the key vault that holds the code signing certificate. This allows the pipeline to retrieve the certificate. If you didn’t adjust the YAML file, use CodeSigning as the variable group name, because it’s referenced in the pipeline configuration.

To access the key vault you need to select the subscription and resource group and hit the “Authorize” button which will create a new service principal in the background for Azure DevOps to interact with Azure resources.

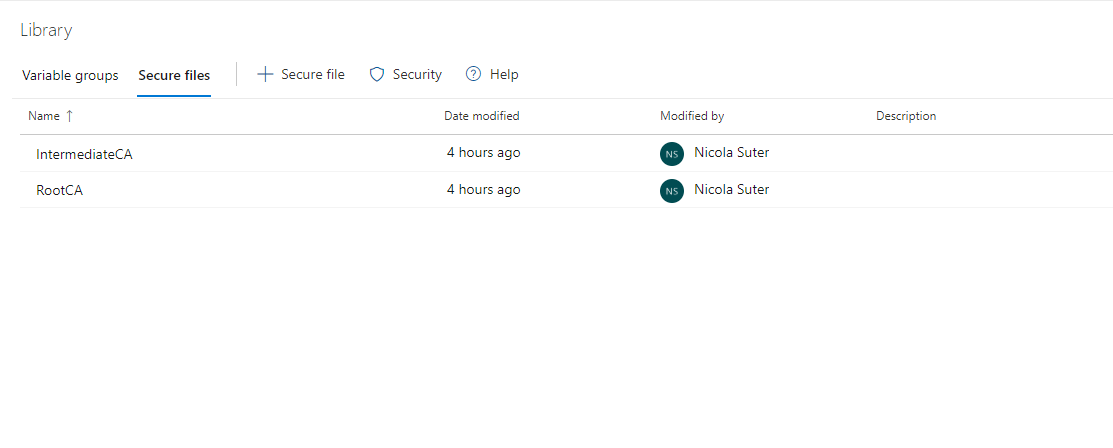

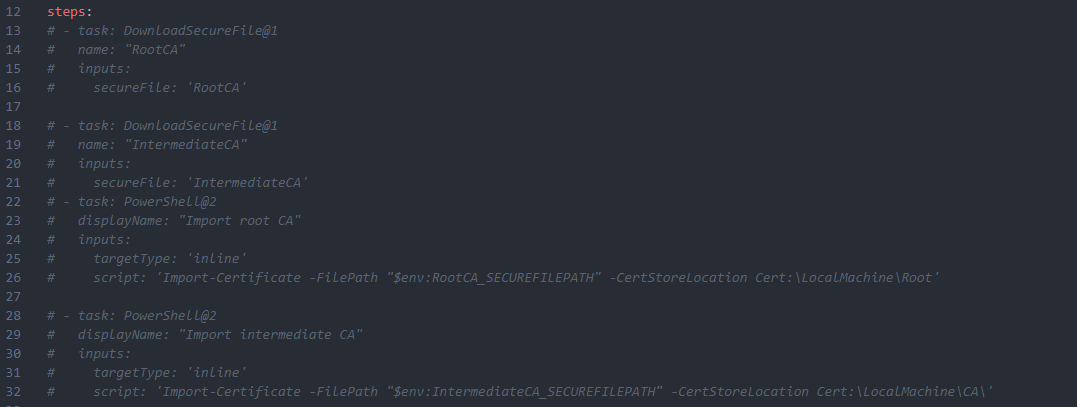

Intermediate and root CA certificates

If your code signing certificate requires an intermediate CA or root CA certificate, you can add them as secure files.

Name them RootCA and IntermediateCA and uncomment the corresponding section in the YAML file to import them in the pipeline. The PowerShell signing process requires a valid certificate chain.

Upload the optional root CA and intermediate CA certificates (public keys only) as secure files

Uncomment the corresponding section of the pipeline, which installs the certificates on the agent.
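Installing the chain on the agent could look like the following sketch. It is not the commented-out section from my pipeline: the function name is hypothetical, and it assumes the secure files were downloaded with DownloadSecureFile@1 tasks, whose secureFilePath output variable holds the path of each file on the agent. Import-Certificate comes from the Windows PKI module, and writing to the LocalMachine stores requires an elevated session.

```powershell
# Hypothetical helper: trust the root CA and register the intermediate CA on the
# build agent so that Set-AuthenticodeSignature can build a complete chain.
function Install-SigningCertificateChain {
    param(
        [Parameter(Mandatory)] [string] $RootCaPath,
        [string] $IntermediateCaPath
    )

    # Root CA goes into the trusted root store
    Import-Certificate -FilePath $RootCaPath -CertStoreLocation 'Cert:\LocalMachine\Root' | Out-Null

    # Intermediate CA (optional) goes into the intermediate certification authorities store
    if ($IntermediateCaPath) {
        Import-Certificate -FilePath $IntermediateCaPath -CertStoreLocation 'Cert:\LocalMachine\CA' | Out-Null
    }
}
```

In an inline task it might be called as Install-SigningCertificateChain -RootCaPath '$(rootCa.secureFilePath)' -IntermediateCaPath '$(intermediateCa.secureFilePath)', where rootCa and intermediateCa are hypothetical names of the download steps.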

The solution in action

If you want to sign a script, add the token #PerformScriptSigning to it (preferably within the first few lines).
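A script that opts into signing might start like this. Only the token line matters here; Get-Greeting is a hypothetical placeholder for your actual code.

```powershell
#PerformScriptSigning
# Hypothetical example script: the token above opts it into signing. The pipeline
# removes the token before signing, and Set-AuthenticodeSignature then appends
# the signature block at the end of the file.

function Get-Greeting {
    param([string] $Name = 'World')
    "Hello, $Name!"
}

Get-Greeting
```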


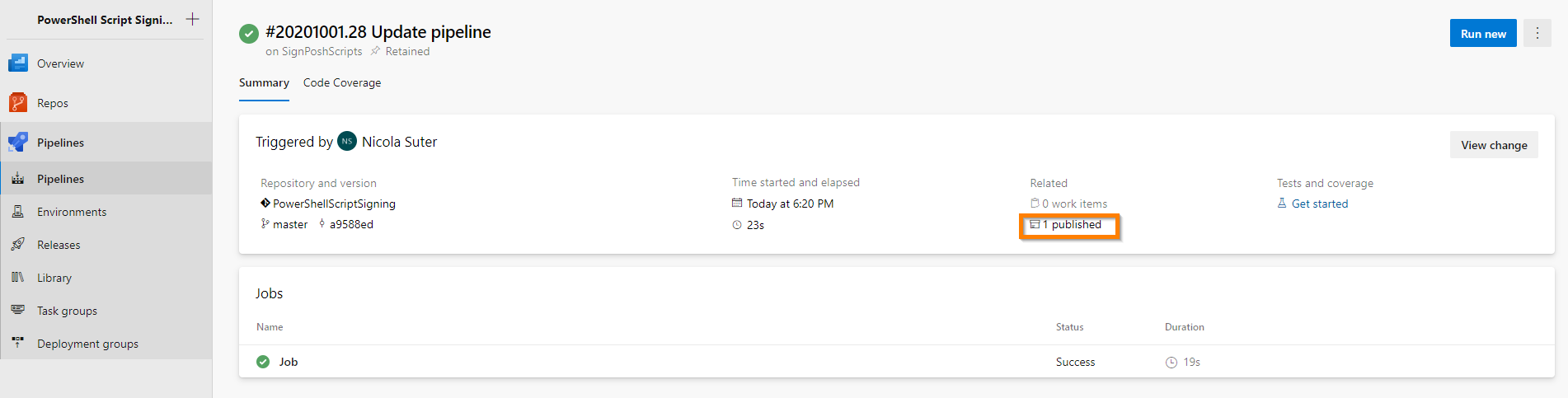

After you commit and push your changes with git, the pipeline starts and performs the certificate retrieval and signing process.

You can find and download the signed scripts under the pipeline artifacts:

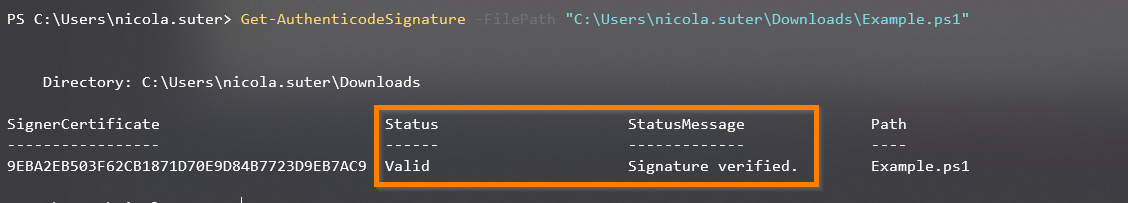

You can test the signature with PowerShell and the Get-AuthenticodeSignature cmdlet.
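To check a whole folder of downloaded artifacts at once, a small report like the following sketch can help. The function name Get-ScriptSignatureReport is hypothetical; it only reads the Status, SignerCertificate and TimeStamperCertificate properties of the signature objects returned by Get-AuthenticodeSignature.

```powershell
# Hypothetical helper: summarize the Authenticode signature of every script in a folder.
function Get-ScriptSignatureReport {
    param([Parameter(Mandatory)] [string] $Path)

    foreach ($file in Get-ChildItem -LiteralPath $Path -Filter '*.ps1' -Recurse -File) {
        $signature = Get-AuthenticodeSignature -FilePath $file.FullName
        [pscustomobject]@{
            Script      = $file.Name
            Status      = $signature.Status
            Signer      = $signature.SignerCertificate.Subject
            # A timestamp keeps the signature valid after the certificate expires
            TimeStamped = $null -ne $signature.TimeStamperCertificate
        }
    }
}
```

Running Get-ScriptSignatureReport -Path .\SignedScripts | Format-Table (folder name hypothetical) should typically show Valid for every script, provided the signing CA is trusted on the machine that performs the check.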

As the pipeline runs automatically on every pushed commit, it’s easy to always use and deliver signed scripts in your future projects. I hope this saves you some time and encourages you to sign your PowerShell scripts.